Convex optimization

Obituaries: Harold Kuhn (1925–2014) —

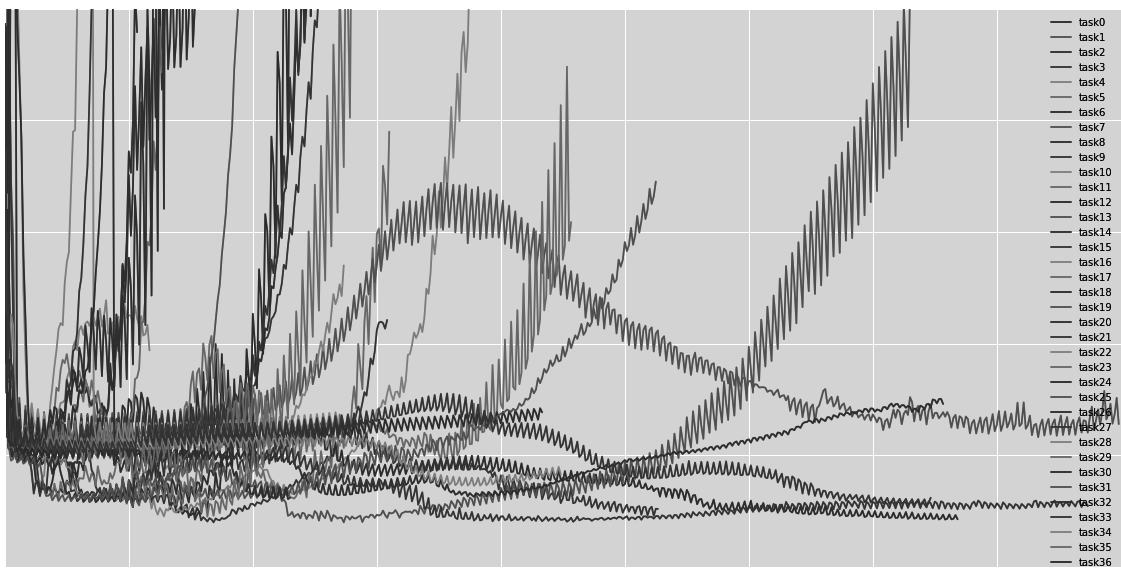

Accelerated first-order methods for regularization — FISTA

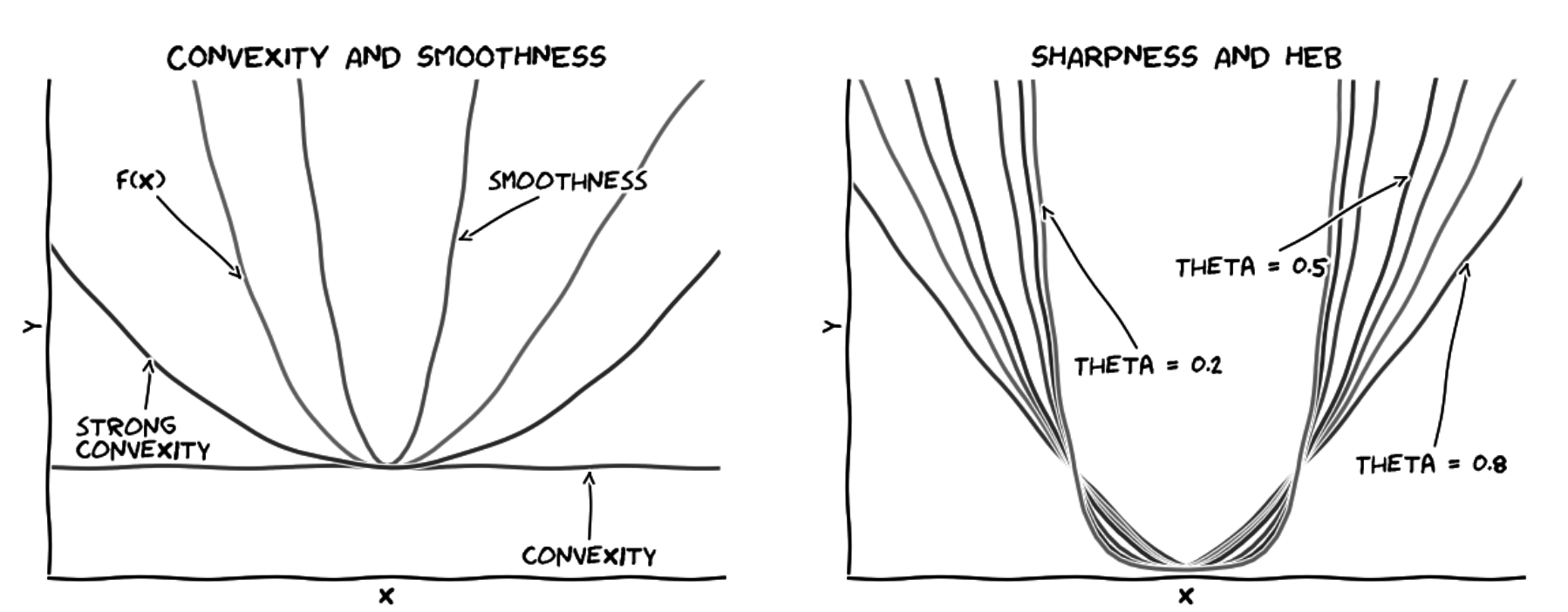

Accelerating first-order methods — The lower bound on the oracle complexity of minimizing a continuously differentiable, $\beta$-smooth convex function is $\Omega(\frac{1}{\sqrt{\epsilon}})$ [Theorem 2.1.6, Nesterov04; Theorem 3.8, Bubeck14; Nesterov08]. General first-order gradient

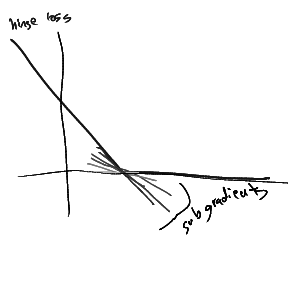

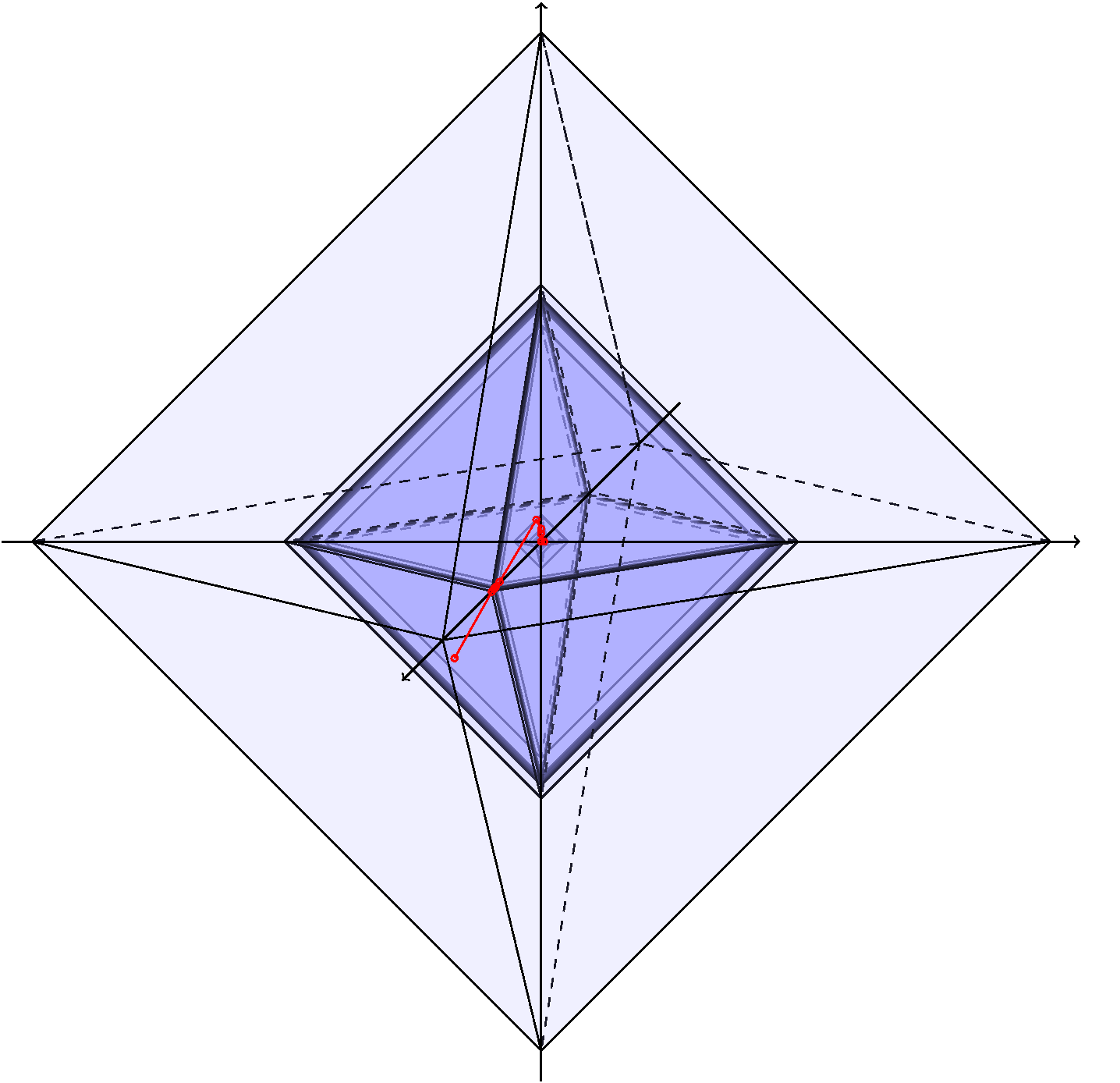

Subgradient — The subgradient generalizes the notion of the gradient/derivative and quantifies the rate of change of a non-differentiable (non-smooth) function ([1], section 8.1 in [2]). For a real-valued function, the

First-order methods for regularization — General first-order methods use only the values of the objective and constraints, together with their subgradients, to optimize a non-smooth (including constrained) function [5]. Such methods that are oblivious to